You See it, You Got it: Learning 3D Creation on Pose-Free Videos at Scale

You See it, You Got it: Learning 3D Creation on Pose-Free Videos at Scale

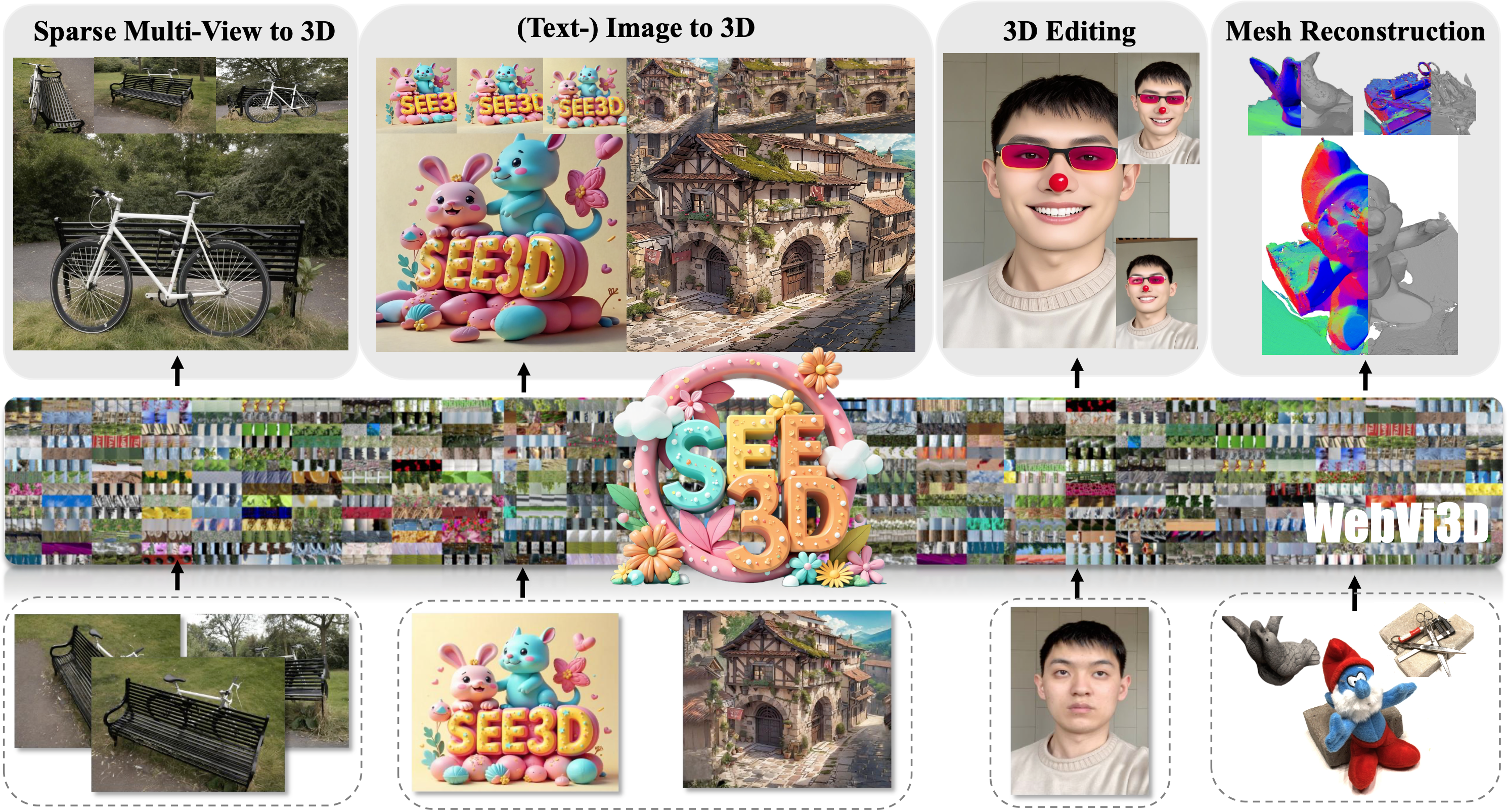

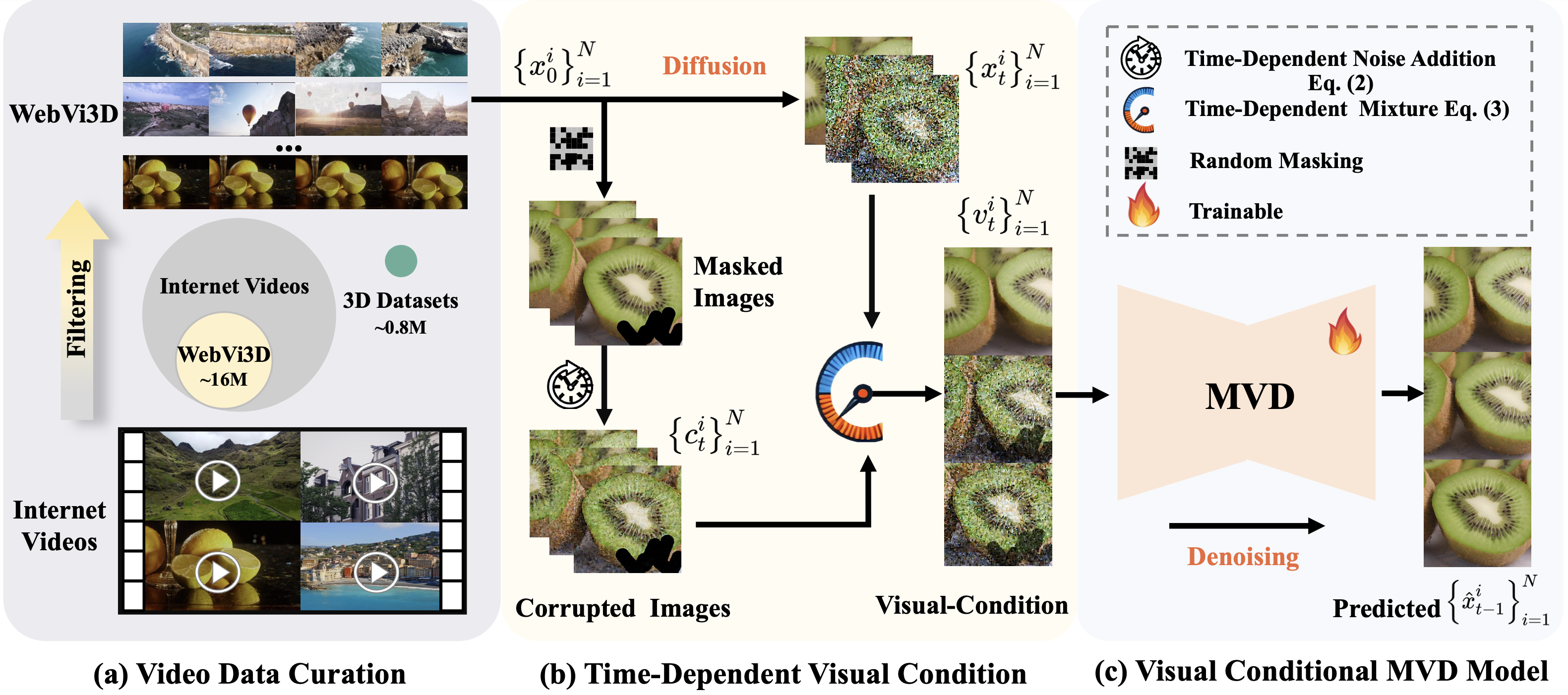

Recent 3D generation models typically rely on limited-scale 3D `gold-labels' or 2D diffusion priors for 3D content creation. However, their performance is upper-bounded by constrained 3D priors due to the lack of scalable learning paradigms. In this work, we present See3D, a visual-conditional multi-view diffusion model trained on large-scale Internet videos for open-world 3D creation. The model aims to Get 3D knowledge by solely Seeing the visual contents from the vast and rapidly growing video data - You See it, You Got it. To achieve this, we first scale up the training data using a proposed data curation pipeline that automatically filters out multi-view inconsistencies and insufficient observations from source videos. This results in a high-quality, richly diverse, large-scale dataset of multi-view images, termed WebVi3D, containing 320M frames from 16M video clips. Nevertheless, learning generic 3D priors from videos without explicit 3D geometry or camera pose annotations is nontrivial, and annotating poses for web-scale videos is prohibitively expensive. To eliminate the need for pose conditions, we introduce an innovative visual-condition - a purely 2D-inductive visual signal generated by adding time-dependent noise to the masked video data. Finally, we introduce a novel visual-conditional 3D generation framework by integrating See3D into a warping-based pipeline for high-fidelity 3D generation. Our numerical and visual comparisons on single and sparse reconstruction benchmarks show that See3D, trained on cost-effective and scalable video data, achieves notable zero-shot and open-world generation capabilities, markedly outperforming models trained on costly and constrained 3D datasets. Additionally, our model naturally supports other image-conditioned 3D creation tasks, such as 3D editing, without further fine-tuning.

Overall Framework of See3D. (a) We propose a four-step data curation pipeline to select multi-view images from Internet videos, forming the WebVi3D dataset, which includes \(\sim\)16M video clips across diverse categories and concepts. (b) Given multiple views, we corrupt the original data into corrupted images \(c_{t}^{i}\) at timestep \(t\) by applying random masks and time-dependent noise. We then reweight the guidance of \(c_{t}^{i}\) and the noisy latent \(x_{t}^{i}\) for the diffusion model to form visual-condition \(v_{t}^{i}\) through a time-dependent mixture. (c) MVD model is capable of training at scale to generate multi-view images conditioned on \(v_{t}^{i}\), without requiring pose annotations. Since \(v_{t}^{i}\) is a task-agnostic visual signal formed through time-dependent noise and mixture, it enables the trained model to robustly adapt to various downstream tasks.

The results shown are rendered by 3D Gaussian Splatting, which is trained by multi-view generation from See3D with three sparse views as input. More qualitative and quantitative results with 3, 6, 9 views input can be found at Supplementary Material.

The results shown are derived directly from See3D with single view as input. The frist frame of the shown video is the reference view.

The frist frame of the shown video is the reference view.